Reproject on Cropping

March 25th, 2023

tech

Projecting a 3D scene to make a 2D picture unavoidably introduces distortion, worse the farther you are from the center, so if you crop to just the corner of a picture you're getting a lot of distortion.
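To put rough numbers on "worse the farther you are from the center", here is a minimal sketch that assumes an ideal rectilinear (pinhole) projection, where a point at angle theta off the optical axis lands at radius f·tan(theta). Under that assumption a small object is stretched by 1/cos²(theta) along the line out from the center and by 1/cos(theta) across it. Real phone lenses and their built-in corrections will differ, and the function name is just for illustration.

```python
import math


def rectilinear_stretch(theta_deg):
    """Stretch of a small object at theta_deg off the optical axis,
    relative to the same object at the image center, assuming an ideal
    rectilinear projection r = f * tan(theta).

    Returns (radial, tangential) scale factors.
    """
    theta = math.radians(theta_deg)
    radial = 1.0 / math.cos(theta) ** 2   # derivative of tan(theta)
    tangential = 1.0 / math.cos(theta)    # tan(theta) / sin(theta)
    return radial, tangential


for deg in (0, 15, 30, 40):
    radial, tangential = rectilinear_stretch(deg)
    print(f"{deg:2d} deg off-axis: radial x{radial:.2f}, tangential x{tangential:.2f}")
```

At 30 degrees off-axis this gives about 1.33x radially against about 1.15x tangentially, which is why things near the corners look pulled outward.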

It would be possible to make this better: your phone knows the distortions its lens makes, and every time you crop something it could automatically reproject the image. I can approximate the correction with a vertical shear:

Followed by a horizontal scale:
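Those two steps compose into a single 2x2 linear map. A minimal sketch, with made-up values for the shear and scale amounts (the ones I actually used were picked by eye in an image editor, so `K_SHEAR` and `S_SCALE` here are purely hypothetical):

```python
def vertical_shear(k):
    """Matrix for y' = y + k * x (x unchanged)."""
    return [[1.0, 0.0], [k, 1.0]]


def horizontal_scale(s):
    """Matrix for x' = s * x (y unchanged)."""
    return [[s, 0.0], [0.0, 1.0]]


def matmul(a, b):
    """Product of two 2x2 matrices given as nested lists."""
    return [[sum(a[i][n] * b[n][j] for n in range(2)) for j in range(2)]
            for i in range(2)]


def apply_affine(m, point):
    """Apply a 2x2 matrix to an (x, y) point."""
    x, y = point
    return (m[0][0] * x + m[0][1] * y, m[1][0] * x + m[1][1] * y)


K_SHEAR = -0.2   # hypothetical shear amount; sign depends on which corner you cropped
S_SCALE = 0.85   # hypothetical horizontal squeeze

# Shear first, then scale: the matrix for the later step goes on the left.
combined = matmul(horizontal_scale(S_SCALE), vertical_shear(K_SHEAR))

for corner in [(0, 0), (100, 0), (100, 100), (0, 100)]:
    print(corner, "->", apply_affine(combined, corner))
```

Since this is an affine map it keeps straight lines straight, but it is only an approximation to a real reprojection and only holds up over a small crop. If you wanted to apply it to pixels with something like Pillow's `Image.transform`, note that it expects the inverse map (output coordinates to input coordinates).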

In writing this up, however, I realized that my phone's (Pixel 5a) camera app takes a different approach. Instead of waiting for you to crop an image, it automatically fixes faces itself. The pictures above were taken with Open Camera, which doesn't have these smarts; here's what I get with the default camera app:

It made the fix initially, not on cropping; the full-size image also minimizes distortion around my face:

Since this is only applied to my face, though, and not the rest of my body, it does give a slightly uncanny effect. Compare to the full-size version of the Open Camera image:

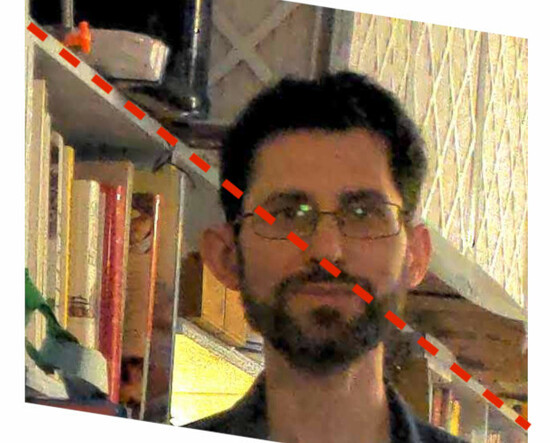

You can see what the Pixel camera app did around my face by looking at the line of the bookcase:

In real life and in a raw photo that line would be straight:

And in my manually corrected version from above the line is straight:

But because the Pixel camera applies its correction within the full image at capture time, instead of waiting for you to crop, it can't preserve all the straight lines in the original image.

I think the Pixel edit is probably still worth doing, since we care a lot about faces and they do look more natural projected as if you were looking straight at them. Reprojecting the whole image to minimize distortion when you crop would also be a pretty nifty feature, and doesn't introduce the kind of distortions that fixing one part of a larger image does.
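For the curious, here is roughly what reprojecting on crop could look like. This is a sketch under stated assumptions, not what any camera app actually does: it assumes an ideal pinhole camera with lens distortion already removed, pixel coordinates measured from the image center, and a known focal length in pixels (the 1500 below is a made-up value). The idea is to rotate a virtual camera so it points straight at the center of the crop, then project again.

```python
import math


def _normalize(v):
    n = math.sqrt(sum(c * c for c in v))
    return tuple(c / n for c in v)


def _cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def recentering_rotation(crop_center, focal_px):
    """Rotation (as three row vectors) that turns the ray through
    crop_center into the new optical axis."""
    cx, cy = crop_center
    z = _normalize((cx, cy, focal_px))          # new viewing direction
    x = _normalize(_cross((0.0, 1.0, 0.0), z))  # keep the horizon level
    y = _cross(z, x)
    return (x, y, z)


def reproject(point, rotation, focal_px):
    """Map a pixel (relative to the original image center) to its
    position in the re-aimed virtual camera."""
    ray = (point[0], point[1], focal_px)
    rx, ry, rz = (sum(r * c for r, c in zip(row, ray)) for row in rotation)
    return (focal_px * rx / rz, focal_px * ry / rz)


FOCAL_PX = 1500.0             # hypothetical focal length in pixels
CROP_CENTER = (800.0, 600.0)  # hypothetical crop center, relative to image center

rotation = recentering_rotation(CROP_CENTER, FOCAL_PX)
for p in [(700.0, 500.0), (800.0, 600.0), (900.0, 700.0)]:
    print(p, "->", reproject(p, rotation, FOCAL_PX))
```

The crop center lands at the origin of the new image, and because the whole thing is a single projective map, any line that was straight in the original stays straight, which is exactly what a face-only fix can't promise.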
