SAT Coaching: What Effect Size?

September 10th, 2014

sat, statcheck

[Pinker's] assertions about SAT score improvements are incorrect. I tutored SAT for about 5 years, at $80-$100/hr. With 12 1.5 hour weekly sessions, a student gets scores up by 60-100 points for math and 50-70 points for verbal. Either I am a genius and incredible tutor or this article is not well researched. — Harriet

Hundreds of points better? A seventh of a standard deviation? How is there such wide disagreement? First, how much disagreement is that? The SAT is scored out of 2400 these days, and the standard deviation was 347 in 2013 (pdf), so Pinker is claiming coaching gives people a boost of about 50 points on average (347 / 7 ≈ 50). This is much less than my friends' claims that gains of 1/2 SD (about 175 points) are typical with their students.
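Since this post keeps switching between fractions of a standard deviation and raw points, here is a minimal sketch of that conversion. It assumes the SD figures quoted in this post (347 for the 2400-point test in 2013, 200 for the 1600-point target in footnote [1]); `sd_to_points` and `points_to_sd` are hypothetical helper names, not from any library.

```python
def sd_to_points(fraction_of_sd: float, sd: float) -> float:
    """Score change corresponding to a fraction of a standard deviation."""
    return fraction_of_sd * sd


def points_to_sd(points: float, sd: float) -> float:
    """Fraction of a standard deviation corresponding to a score change."""
    return points / sd


# Standard deviations quoted in this post (assumed accurate for each era).
SD_2400_IN_2013 = 347
SD_1600_TARGET = 200

# Pinker: a seventh of an SD on the 2400-point scale.
print(round(sd_to_points(1 / 7, SD_2400_IN_2013)))   # 50
# Half an SD on the same scale.
print(round(sd_to_points(1 / 2, SD_2400_IN_2013)))   # 174
# Briggs's raw commercial-class estimate on the 1600-point scale.
print(round(sd_to_points(0.15, SD_1600_TARGET)))     # 30
# Going the other way: 26 points on the 1600-point scale.
print(points_to_sd(26, SD_1600_TARGET))              # 0.13
```

The key thing to keep in mind is that "points" only mean something relative to the scale's SD, which is why the same fraction of an SD is a different number of points on the older 1600-point test.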

There has been some research on the efficacy of SAT coaching, and that's where Pinker's claim is coming from. Unfortunately it's all correlational:

- Briggs 2001 (pdf) looked at the National Education Longitudinal Study. This study tracked 16,500 students through three surveys (1988, 1990, 1992) and included both PSAT and SAT/ACT scores, as well as a question asking whether students received any coaching for the SAT/ACT. This lets you see whether coached students did better on the SAT/ACT than you would have predicted from their PSAT scores. The raw "effect" of commercial classes is then 15% SD (30 points) [1], while for a private tutor it is 13% SD (26 points).

  On the other hand, there are good reasons to think this is an overestimate. If students were randomly assigned to get test prep or not, this would be about right, but instead there's the possibility that whatever factors lead a student to try test prep also lead to better PSAT-to-SAT improvements. In fact, when Briggs attempted to control for the various ways the test-prep and non-test-prep students were known to differ, the apparent effect dropped to 11% SD (21 points).

- Hansen 2004 (pdf) also ran a similar analysis, this time of data provided by the College Board about 1995-6 test takers. Using a complex statistical technique ("full matching") that I don't understand well enough to assess, Hansen finds coaching gives people an average gain of 12% SD (27 points) [2]. This study relied on cooperation from the College Board (the SAT's publisher), which has quite a lot invested in the idea that its test is prep-resistant, so this estimate is more likely to be biased downward.

- Buchmann 2010 (pdf) ran a similar analysis to Briggs, adding in data from a few more years of the NELS and controlling for somewhat different things. After controls, they found commercial classes gain you about 15% SD (30 points), while tutors get you 19% SD (37 points). The authors seem to really want to find a larger effect, which would let them claim that people who can't afford coaching are at a larger disadvantage, so I would expect these numbers to be biased upward.
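The PSAT-to-SAT comparison in these studies amounts to asking how much better coached students do than their PSAT scores predict. Here is a minimal sketch of that idea on made-up data (not NELS), using plain least squares. The hypothetical `motivation` variable, the assumed true effect of 0.10 SD, and every other number are illustrative assumptions. Motivation makes students both more likely to get coaching and more likely to improve, so leaving it out inflates the coaching coefficient. Real studies can only control for factors they actually measured, and Hansen's full matching is a different, more involved technique than this regression.

```python
import random


def ols(rows, ys):
    """Least-squares coefficients via the normal equations (X'X) b = X'y.

    rows is a list of feature tuples; an intercept column is prepended,
    so the returned list is [intercept, coef_1, coef_2, ...].
    """
    X = [[1.0, *r] for r in rows]
    k = len(X[0])
    xtx = [[sum(x[i] * x[j] for x in X) for j in range(k)] for i in range(k)]
    xty = [sum(x[i] * y for x, y in zip(X, ys)) for i in range(k)]
    # Gauss-Jordan elimination with partial pivoting on [X'X | X'y].
    a = [row + [b] for row, b in zip(xtx, xty)]
    for col in range(k):
        pivot = max(range(col, k), key=lambda r: abs(a[r][col]))
        a[col], a[pivot] = a[pivot], a[col]
        for r in range(k):
            if r != col:
                factor = a[r][col] / a[col][col]
                a[r] = [v - factor * p for v, p in zip(a[r], a[col])]
    return [a[i][k] / a[i][i] for i in range(k)]


def simulate(n=20_000, true_effect=0.10, seed=0):
    """Made-up students, all scores in SD units; every parameter is assumed."""
    rng = random.Random(seed)
    psat, coached, motivation, sat = [], [], [], []
    for _ in range(n):
        ability = rng.gauss(0, 1)
        m = rng.gauss(0, 1)                    # hypothetical confounder
        p = ability + rng.gauss(0, 0.5)        # PSAT: noisy measure of ability
        # Motivated students are more likely to get coaching...
        c = 1.0 if rng.random() < (0.5 if m > 0 else 0.2) else 0.0
        # ...and also improve more relative to their PSAT on their own.
        s = ability + 0.15 * m + true_effect * c + rng.gauss(0, 0.5)
        psat.append(p)
        coached.append(c)
        motivation.append(m)
        sat.append(s)
    return psat, coached, motivation, sat


psat, coached, motivation, sat = simulate()
# Coefficient index 2 is "coached" (0 is the intercept, 1 is PSAT).
raw = ols(list(zip(psat, coached)), sat)[2]
controlled = ols(list(zip(psat, coached, motivation)), sat)[2]
print(f"coaching effect, PSAT only:        {raw:.3f} SD")
print(f"coaching effect, plus motivation:  {controlled:.3f} SD")
```

Under these assumptions the PSAT-only coefficient comes out noticeably above the true 0.10, while adding `motivation` brings it back near 0.10. This is the same direction of change Briggs saw when adding controls, though the specific numbers here are invented.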

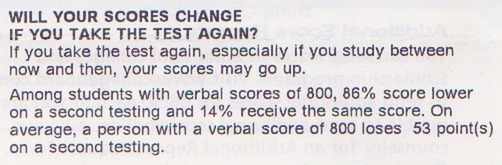

- People tend to get better on the test over time, whether or not

they prep in between. For example, my score went up 45% SD (100

points) between taking the test junior spring and senior fall.

The SAT score report even talks about how scores often go up on

a retake:

Without a control group, which SAT tutors won't have, it's very hard to separate gains from coaching from simple gains from waiting a while and getting older and smarter.

- The studies don't have randomized control groups. Having a control group at all is better than nothing, but without randomization there may be some hard-to-measure factor that both causes students to get test prep and raises or lowers their scores. If that hidden factor is "parental involvement", leading to both prep and higher scores, then coaching is even less effective than we thought, while if it's "students who were going to do badly want more prep" then it's more effective.

- Most test prep is nearly useless, but the tutors I know were working with highly motivated kids with strict parents, a situation that leads to higher-than-average gains. This kind of test prep makes up a small enough fraction of coaching overall that it doesn't show up in the studies.

- The studies are on roughly 20-year-old data, and since then we may have gotten better at prepping people for the SAT, or the SAT may have gotten more coachable.
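The retake point can be illustrated with a toy simulation. This is a minimal sketch that assumes everyone gains 0.30 SD between sittings just from retaking and growing up, and that coaching adds 0.10 SD on top. Both numbers are invented for illustration, as is the helper name `retake_gains`. A tutor who only sees before-and-after scores for their own students measures the sum of the two. Comparing against a randomly assigned uncoached group, which is what the studies lack, isolates the coaching effect.

```python
import random
from statistics import mean


def retake_gains(n=10_000, natural_gain=0.30, coaching_effect=0.10, seed=1):
    """Simulated score changes (in SD units) between two sittings.

    natural_gain and coaching_effect are assumed values, not estimates.
    Coaching is assigned by coin flip, so the uncoached group is a valid control.
    """
    rng = random.Random(seed)
    coached_gains, uncoached_gains = [], []
    for _ in range(n):
        coached = rng.random() < 0.5
        # Each sitting has its own measurement noise; stable ability cancels
        # out of the difference, so it is left out here.
        first = rng.gauss(0, 0.3)
        second = natural_gain + (coaching_effect if coached else 0.0) + rng.gauss(0, 0.3)
        (coached_gains if coached else uncoached_gains).append(second - first)
    return coached_gains, uncoached_gains


coached, uncoached = retake_gains()
# What a tutor sees: their students' before-vs-after change (about 0.40 SD).
tutor_view = mean(coached)
# What a randomized control group lets you see (about 0.10 SD).
with_control = mean(coached) - mean(uncoached)
print(f"tutor's before/after gain: {tutor_view:.2f} SD")
print(f"gain relative to control:  {with_control:.2f} SD")
```

With these made-up parameters, the tutor's view is about four times the actual coaching effect, entirely because of the assumed natural gain between sittings.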

(Separately, the next SAT sitting is 10/11. I'm kind of tempted to sign up to take it to see if I can get the minimum score. Is that worth $52.50 and a Saturday morning?)

[1] This and later numbers are based on a total score of 1600 and a standard deviation of 200, which was the target during this period.

[2] This is from 1995-6 data, after the target standard deviation had been raised to 210.
