Can Ads be GDPR Compliant?

January 7th, 2023

ads, cookies, privacy, tech

I'm not a lawyer or an expert in privacy regulation; this is something I follow because I'm interested in it.

I worked in ads until June 2022, but I'm speaking only for myself. I don't expect to go back into the industry.

So, how are sites not compliant?

When you visit a site in Europe, or an international site as a European, you'll typically see a prompt like this:

In this screenshot El País is asking for permission to use cookies and use your data to personalize ads.

Why are they asking you? A combination of two regulations:

The ePrivacy Directive (2002) which requires the site to get your consent before using cookies or other storage on your device unless they're strictly necessary to provide a service you requested.

The GDPR (2016) which tightly limits what companies can do with your data without your consent.

The idea is, if you click "accept" then they can say they had your consent for all the advertising stuff they do. But I think it's very unlikely this is compliant with the GDPR.

For example, France's data privacy regulator, the CNIL, recently fined Microsoft €60M (full text) for a similar popup on Bing. I'm going to come back to this decision later because it has other implications, but in paragraph 65 the CNIL ruled that Bing's cookie banner was not collecting valid consent because it took more clicks to refuse cookies than to accept them.

The principle here is that for consent to be valid under the GDPR it needs to be just as easy to refuse consent as it is to give it.

This is not widely respected today: for most companies it's much more profitable to put up a not-really-legal banner that heavily pushes users towards saying yes and hope they don't get in trouble. As the data protection agencies continue their enforcement, though, I think this will become less practical.

Another approach you see on a few sites is the one that Der Spiegel takes:

They offer a choice between accepting their standard ad stuff or paying to subscribe to the site (more details). I'm glad they're giving users the choice here and I think this should be legal, but I'm pretty sure it isn't right now. The problem is that the user's consent isn't "freely given" in terms of the GDPR's Article 4(11) if they would otherwise have to pay for access.

The third option is to have a cookie banner that is as easy to reject as it is to accept:

When I click "deny" and visit their site, they show a popup saying

"Lower quality ads may be displayed." This includes (definitely low

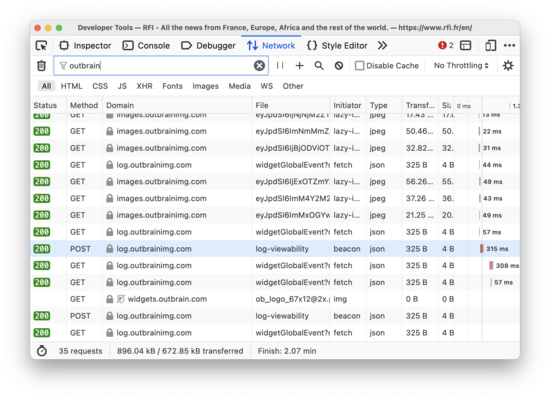

quality...) ads from Outbrain, with many

network requests to outbrain.com and

outbrainimg.com:

The problem is, per the Schrems II ruling these are also not GDPR-compliant. Because US companies are required to share information with the US government and IP addresses are personal information, the GDPR requires sites to get consent from users before sending any of their information to American companies or their subsidiaries. European courts have applied this ruling to fine sites for using Google Analytics, Google Fonts, and the Akamai CDN. Since Outbrain is an American company, based in NYC, this is not compliant.
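One way to see which outside hosts a page talks to is to group its network requests by hostname. Below is a minimal sketch of that, assuming you have already exported the request URLs from your browser's network panel. The function name `third_party_hosts`, the page address `news.example`, and the URL paths are all made up for illustration; only the two Outbrain hostnames come from the example above. It uses a naive "same host or subdomain" rule rather than a proper public-suffix check, and it says nothing about whether any particular request is legal.

```python
from collections import Counter
from urllib.parse import urlsplit


def third_party_hosts(page_url, request_urls):
    """Count requests per hostname, skipping the page's own host and its subdomains.

    Naive rule: a host is first-party if it equals the page's hostname or
    ends with "." + that hostname. A real tool would use a public suffix list.
    """
    page_host = urlsplit(page_url).hostname
    counts = Counter()
    for url in request_urls:
        host = urlsplit(url).hostname
        if host is None:
            continue  # not an absolute URL, nothing to classify
        if host == page_host or host.endswith("." + page_host):
            continue  # first-party request
        counts[host] += 1
    return counts


# Hypothetical request log; the paths are invented for this example.
requests_seen = [
    "https://news.example/style.css",
    "https://static.news.example/logo.png",
    "https://outbrain.com/hypothetical-widget.js",
    "https://outbrain.com/hypothetical-ping",
    "https://outbrainimg.com/hypothetical-thumb.jpg",
]

for host, n in third_party_hosts("https://news.example/article", requests_seen).items():
    print(host, n)
```

A list like this is only a starting point: you would still need to work out who operates each host and where they are based.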

Schrems II compliance rules out all commercially available adtech options I know about, and the only fully GDPR-compliant sites I've seen are ones where clicking "reject" means you don't get any ads at all.

As a somewhat speculative aside, I think there's another problem with these consent popups: when you visit the site they read your cookies. Per the ePrivacy Directive sites are only allowed to interact with storage on your device to implement functionality "strictly necessary" for a service you requested. If I visit a site and have never seen their consent popup before, I don't see how you could argue that accessing my device's storage to check whether I've already been asked for consent meets that bar. On the other hand I've never seen someone make this argument and consent popups are everywhere, so it's probably too legalistic.

But anyway, let's say you decide to build your own ads system fully in-house, or a very careful European startup comes out with a GDPR-compliant display ads product. What would this look like?

The GDPR requires you to have one of several legal bases for any personal data processing. The most well known basis is "consent", but there are several others. Meta (Facebook) tried to work around this with the "performance of a contract" basis, but was fined €390M. The only basis, other than consent, that might apply is "legitimate interest".

Sites interpret this term in a variety of ways. For example, Spotify claims that they have a legitimate interest in "using advertising to fund the Spotify Service, so that we can offer much of it for free" and so "use your personal data to tailor advertising to your interests". This is unlikely to satisfy a European court: TikTok announced they would do this and then didn't, probably because it would not have been legal. Nearly every site personalizes ads only for users who have (per their dubious popups) consented, and these are sites that have a strong interest in, and history of, interpreting the GDPR as loosely as possible.

If you can't personalize ads, however, that doesn't mean you can't show ads. The problem is that personalization isn't the only thing ads use personal data for. Let's talk about fraud.

If I wanted to sell some ad space on my site, there are a lot of things an advertiser might care about. The biggest one, however, is how many users are going to see their ads per dollar. We might agree that they will pay me $1 for every thousand page views ($1 CPM). With a naive implementation, at the end of the month I check my server logs, see that my site served 1M pages and bill the advertiser $1k.

No serious advertiser would agree to this, however, because it is so vulnerable to fraud. All it takes is standing up a little bot to repeatedly load my articles, and I can bill them for millions of visits when I was only visited by thousands of real users.
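To make the arithmetic concrete, here is a minimal sketch of the naive billing scheme and how bot traffic inflates it. The function name `cpm_bill` and the specific traffic numbers in the fraud scenario are assumptions chosen for illustration; only the $1 CPM rate and the 1M page view example come from the text above.

```python
def cpm_bill(page_views: int, cpm_dollars: float) -> float:
    """Amount billed for `page_views` page views at a given CPM (price per thousand)."""
    return page_views / 1000 * cpm_dollars


# The example from the text: 1M pages served at a $1 CPM.
print(cpm_bill(1_000_000, 1.0))  # 1000.0

# Hypothetical fraud scenario: a few thousand real visits, plus a bot
# that reloads articles a couple of million times.
real_views = 5_000
bot_views = 2_000_000

honest_bill = cpm_bill(real_views, 1.0)
inflated_bill = cpm_bill(real_views + bot_views, 1.0)

print(honest_bill)    # 5.0
print(inflated_bill)  # 2005.0
```

Since the server logs can't tell the two kinds of page view apart, the advertiser has no way to check the bill from the count alone.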

Instead, advertisers want the protection of fraud detection. This means collecting lots of user data as the ad is shown on the page and processing it with a combination of clever statistics and human analysis to identify and ignore the portion of traffic that doesn't represent real users. This requires catching not only simple bots like search engine spiders, but also sophisticated fraud operations involving rented botnets or giant racks of real phones.

Is it within the legitimate interests of sites to collect user data for ad fraud detection? The ad industry has historically thought that it was. For example, the IAB's TCFv2, the standard protocol consent popups use to talk to ad networks, categorizes ad fraud detection under "Special Purpose 1", with users having "No right-to-object to processing under legitimate interests". On the other hand, based on points 52 and 53 of the recent Microsoft ruling I would predict that French regulators would rule that since users do not visit sites to see ads, sites cannot claim that they have a legitimate interest in using personal data to attempt to determine whether their ads are being viewed by real people.

This is not settled; among other things the Microsoft ruling was primarily considering ePrivacy which is stricter on some points. But I think it's more likely than not that when we get clarity from the regulators it will turn out that the kind of detailed tracking of user behavior necessary for effective detection of ad fraud is not considered to be within a publisher's legitimate interests.

Giving up on using personal data for ad fraud detection would make online advertising much less profitable, but it might not kill it entirely. I see three ways this could maybe still work:

Performance advertising. Online ads are divided into two main categories: "performance", which is trying to get you to do something now (click through from the fishing site you're visiting and buy this new lure), and "brand", which is trying to influence your future purchases in a much less legible manner (drink more Coke). Brand advertising is where the biggest spending is, but because the purchases aren't tied to clicks it's very dependent on keeping down fraud. With performance advertising the advertiser can measure whether people really are buying things, so it doesn't matter so much how many bots are on your site.

Ratings. The way we used to handle this with TV and radio, back when large-scale tracking was impractical, was with companies like Nielsen estimating how many people a given broadcast reached. Their estimates were based on a mixture of automated and manual surveys, and only reached a small fraction of the population. While they worked somewhat well historically when media was highly centralized and there were only a few options, they are a poor fit for the current TV market, let alone the far more fragmented web.

Private Browser APIs. Browsers could offer sites a way to verify that their users aren't bots without any personal data being sent off the device. Trust Tokens are one way this could work, but since most visitors wouldn't have them I don't think they're enough. Even though this is close to an area I've worked in I doubt there's a solution here: it's very hard to build something that hits all of (a) minimal load on the client, (b) sufficiently useful fraud detection, (c) sufficiently private, and (d) does not require consent under the GDPR or ePrivacy. The last one is especially hard: how could a private browser API not involve storing information on the client?

At this point, however, we're talking about a model of advertising that is far less able to support most sites than the status quo, and one that is only viable for very large publishers (Facebook, Reddit, NYT), or sites with a strong commercial tie-in (credit card reviews, home improvement, personal finance).

Overall I'm not happy about this conclusion. While I don't enjoy seeing ads I am glad they exist; as I've written before I think a world without ad-supported sites would be worse. It's not just me: when users can choose between ad-supported and paid options the former is typically the most popular option. Regulating the advertising business model to where it's no longer practical for most sites does not make users better off.

Ideally we'd see some changes to these regulations which balanced the privacy goals of the GDPR against the minimum necessary for ad-supported sites. Specifically, I'd like to legalize some things that are mostly already how many people seem to think this works:

Allow ad fraud detection under "legitimate interest", which is the key thing keeping ads from being practical under the GDPR.

Allow Der Spiegel's approach where sites can let users choose between ads with personalization or paying a reasonable price for access, under the principle that this is a real choice.

Slightly relax ePrivacy's "strictly necessary in order to provide an information society service explicitly requested". In practice this means that nearly every site shows a cookie banner, even to do completely normal things like keeping an item in your shopping cart for later.

I'm less confident in my proposed solutions than I am about there being a problem, but I do think these three changes strike a good balance between the privacy goals of the GDPR and the financial, ease of use, and competition benefits of users being able to move from site to site without the friction of paywalls.
